The Algorithms for FPGA Implementation of Sparse Matrices Multiplication

keywords: FPGA, sparse matrices, sparse BLAS, matrices multiplication

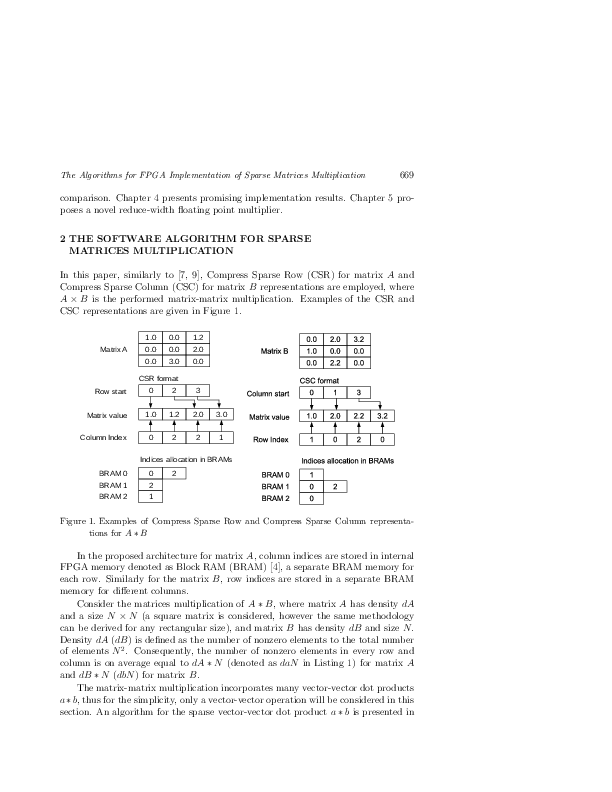

In comparison to dense matrices multiplication, sparse matrices multiplication real performance for CPU is roughly 5--100 times lower when expressed in GFLOPs. For sparse matrices, microprocessors spend most of the time on comparing matrices indices rather than performing floating-point multiply and add operations. For 16-bit integer operations, like indices comparisons, computational power of the FPGA significantly surpasses that of CPU. Consequently, this paper presents a novel theoretical study how matrices sparsity factor influences the indices comparison to floating-point operation workload ratio. As a result, a novel FPGAs architecture for sparse matrix-matrix multiplication is presented for which indices comparison and floating-point operations are separated. We also verified our idea in practice, and the initial implementations results are very promising. To further decrease hardware resources required by the floating-point multiplier, a reduced width multiplication is proposed in the case when IEEE-754 standard compliance is not required.

mathematics subject classification 2000: 65F50

reference: Vol. 33, 2014, No. 3, pp. 667–684